AI Pods as a Service: Modular, Scalable, and Built for Speed

Why the headcount-driven growth model has reached its limit and what comes next

For decades, the logic of scaling software development was almost industrial: if scope increased, you added more people. The equation seemed simple, and for a long time it was sufficient. Companies grew by hiring, managed quality by creating layers of review, and controlled delivery by distributing responsibilities among increasingly specialized roles. The model worked until the cost of growing began to compete with the revenue that growth generated.

Artificial intelligence did not arrive merely to automate tasks. It arrived to make this old model economically unsustainable for those who insist on maintaining it.

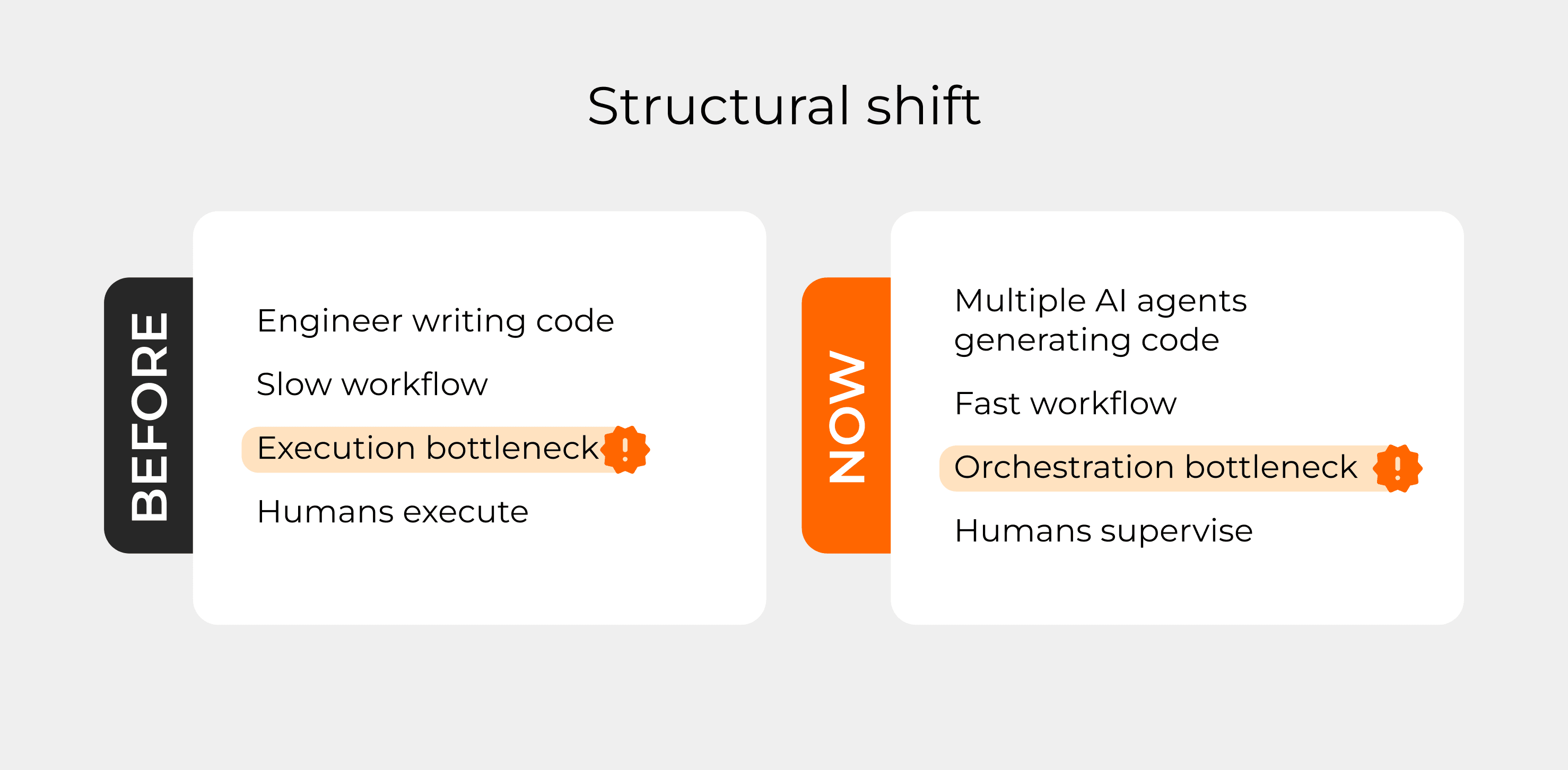

Today, significant parts of the software development cycle (testing, documentation, refactoring, code generation, structural adjustments) can be executed by agents with a consistency and speed that no human team can match at scale. Execution is no longer the bottleneck. The bottleneck has shifted to the design of the system that orchestrates that execution: what the market has begun calling the harness.

It is in this context that AI-native development Pods become relevant: not as a niche solution for experimental startups, but as a practical redefinition of the productive unit in software engineering.

The problem with traditional scale

The classic software services model grows on top of a simple premise: skilled people perform skilled work, and more work requires more people. A company's operational efficiency depends on how much value it can extract from each hour worked. When that efficiency drops because of turnover, onboarding, or the overhead of communication between teams, margins suffer.

What makes this model vulnerable is not its internal logic. It’s that cost scales at roughly the same rate as capacity. There is no real decoupling between the two variables: growing revenue by 30% in this model often requires increasing headcount by 20% to 25%, and only the narrow gap between those figures turns into additional margin.
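To make that arithmetic concrete, here is a minimal sketch under loudly stated assumptions: total cost is taken to grow at exactly the headcount growth rate, and the baseline figures (revenue 100, cost 80, so a 20% margin) are hypothetical, not drawn from any real company. The function name `margins` is likewise invented for illustration.

```python
def margins(revenue: float, cost: float,
            revenue_growth: float, headcount_growth: float) -> tuple[float, float]:
    """Return (margin before, margin after) as fractions of revenue.

    Assumption: total cost grows at exactly the headcount growth rate.
    """
    new_revenue = revenue * (1 + revenue_growth)
    new_cost = cost * (1 + headcount_growth)
    before = (revenue - cost) / revenue
    after = (new_revenue - new_cost) / new_revenue
    return before, after


# Hypothetical baseline: revenue 100, cost 80, i.e. a 20% margin.
for growth in (0.20, 0.25, 0.30):
    before, after = margins(100.0, 80.0, 0.30, growth)
    print(f"revenue +30%, headcount +{growth:.0%}: margin {before:.1%} -> {after:.1%}")
```

With these made-up numbers, margin rises from 20.0% to 26.2% when headcount grows 20%, to 23.1% when it grows 25%, and stays flat at 20.0% if headcount grows the full 30%. The gain is bounded by how far headcount growth lags revenue growth.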

AI breaks this link, and not gradually but structurally.

When an agent can generate, test, and document code with production-level quality, the value of an engineering hour changes in nature. Execution, which was once scarce, becomes abundant. What remains scarce is the ability to design the system that directs and validates that execution: the capacity to interpret business context, make architectural decisions, and supervise what the agents produce.

In other words, the highest-value work in software engineering has not disappeared. It has become more concentrated and more decisive.

What is an AI-native Pod

A Pod is not a smaller team. It is a redesigned production unit.

In practice, a Pod is composed of two or three senior engineers with broad technical expertise and a genuine ability to orchestrate agents as part of the workflow: not as auxiliary tools, but as a central component of the SDLC. These professionals understand architecture, absorb business context deeply, and take full responsibility for delivery, from discovery to deployment.

The division of responsibilities within the Pod is clear: predictable and structured parts of development are delegated to agents. Decisions that require judgment, contextual interpretation, and evaluation of systemic impact remain human. Not because of technological limitations, but by design.

This is different from simply adding AI tools to an existing process. A traditional team that starts using coding agents does not become AI-native. It merely accelerates what it was already doing, including the structural inefficiencies of the process.

Structuring an AI-native Pod means redesigning the workflow from the ground up, treating agents as an integral part of the production chain. The result is not just speed: it is a flow with lower variability, greater delivery predictability, and more consistent quality.

The logic of the harness

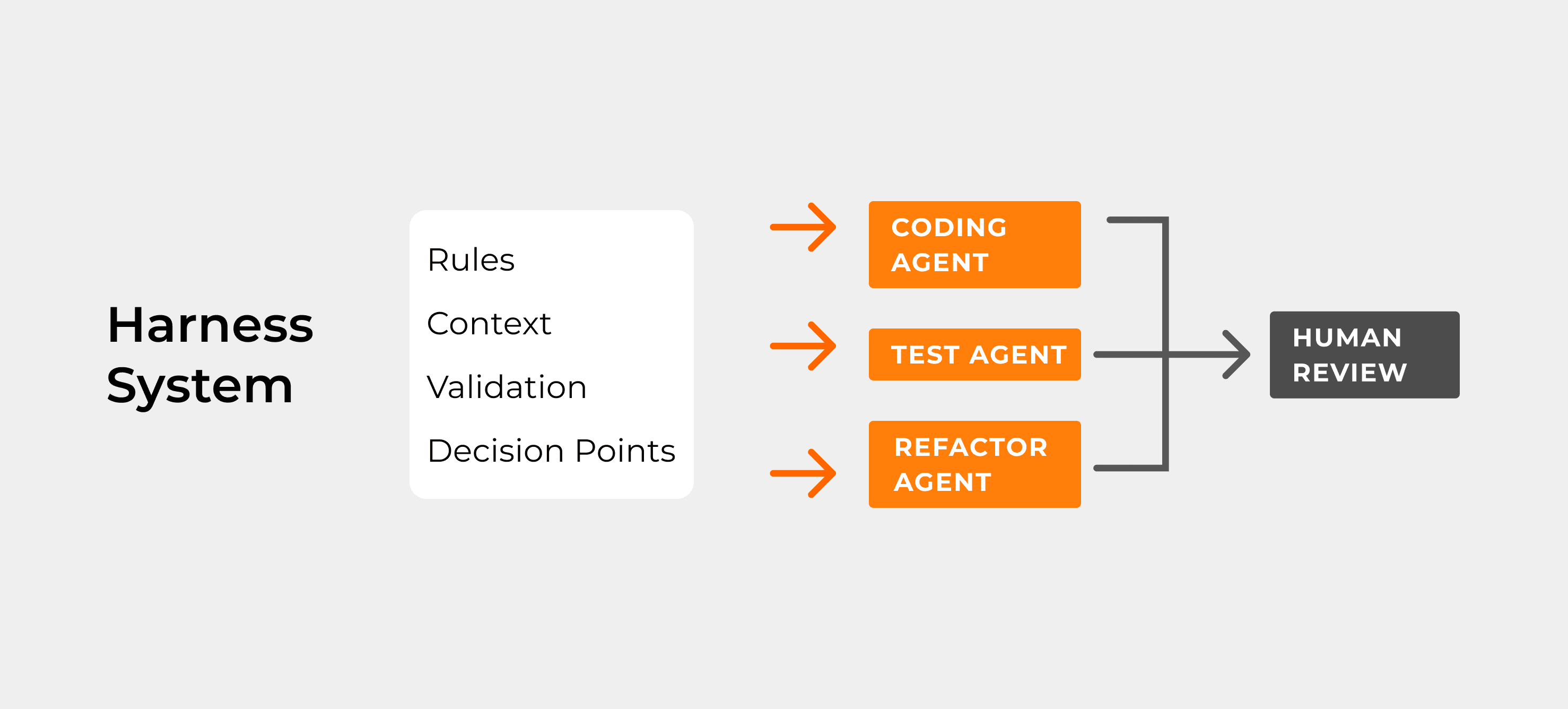

The concept of the harness, the system that surrounds and directs the work of agents, is probably the least discussed and most critical element in the adoption of AI in software development.

Adding a coding agent to an unstructured environment does not generate net gains. It simply applies speed to the status quo: problems that previously took time to surface now appear faster. That is not productivity; it is merely the anticipation of failure.

The harness is what turns automation into leverage. It defines what agents are allowed to do, how their output is validated, where humans need to make decisions, and how context is preserved across cycles. In well-structured environments, speed and quality evolve together. In poorly structured ones, AI amplifies existing noise.
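The four responsibilities named above (what agents may do, how output is validated, where humans decide, how context is preserved) can be sketched in a few lines. This is a minimal, hypothetical illustration, not any real framework's API: `Harness`, `Task`, `Route`, `run_cycle`, and `stub_agent` are invented names, the agent is a stand-in function, and validation is reduced to simple string checks where a real harness would more likely run tests, linters, or reviews.

```python
from dataclasses import dataclass, field
from enum import Enum
from typing import Callable


class Route(Enum):
    AGENT = "agent"   # predictable, structured work delegated to agents
    HUMAN = "human"   # judgment calls kept with the Pod's engineers


@dataclass
class Task:
    kind: str         # hypothetical task category, e.g. "tests" or "architecture"
    description: str


@dataclass
class Harness:
    # What agents are allowed to do: task kinds they may execute alone.
    agent_allowed: set[str]
    # How output is validated: every check must pass before acceptance.
    validators: list[Callable[[str], bool]]
    # How context is preserved: accepted outputs carried into later cycles.
    context: list[str] = field(default_factory=list)

    def route(self, task: Task) -> Route:
        # Where humans decide: anything outside the allowed set goes to a person.
        return Route.AGENT if task.kind in self.agent_allowed else Route.HUMAN

    def accept(self, task: Task, output: str) -> bool:
        """Accept agent output only if all validators pass, and record it."""
        if all(check(output) for check in self.validators):
            self.context.append(f"{task.kind}: {output}")
            return True
        return False


def run_cycle(harness: Harness, tasks: list[Task],
              agent: Callable[[Task, list[str]], str]) -> tuple[list[Task], list[Task]]:
    """Route each task; return (accepted agent work, work escalated to humans)."""
    accepted: list[Task] = []
    escalated: list[Task] = []
    for task in tasks:
        if harness.route(task) is Route.HUMAN:
            escalated.append(task)
            continue
        output = agent(task, harness.context)  # the agent sees preserved context
        if harness.accept(task, output):
            accepted.append(task)
        else:
            escalated.append(task)  # failed validation falls back to human review
    return accepted, escalated


def stub_agent(task: Task, context: list[str]) -> str:
    # Stand-in for a real coding agent; it ignores context and echoes a draft.
    return f"draft: {task.description}"


harness = Harness(
    agent_allowed={"tests", "docs", "refactor"},
    validators=[lambda out: bool(out.strip()), lambda out: "TODO" not in out],
)
tasks = [
    Task("tests", "cover invoice parser"),
    Task("architecture", "split billing service"),
    Task("docs", "TODO"),
]
accepted, escalated = run_cycle(harness, tasks, stub_agent)
print([t.kind for t in accepted], [t.kind for t in escalated])
# prints: ['tests'] ['architecture', 'docs']
```

One design choice worth noting in this sketch: output that fails validation is escalated to a human rather than silently retried or accepted. That is one way to keep fast agents from amplifying noise; a real harness might instead retry with feedback before escalating.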

For custom software companies, this has a direct implication: competitive advantage no longer lies in having the best executors. It lies in having the best production system. And intelligent production systems require process engineering, not just code engineering.

Why this matters now

The software services market is in the middle of a transition that few companies recognize while it is happening.

Companies that continue scaling through the logic of headcount will face increasing margin pressure in the coming years. Not because they hired poorly, but because they will be competing against organizations that deliver the same quality with smaller, more responsive structures. The cost asymmetry will grow and become more visible.

On the other hand, the premature adoption of AI without redesigning processes creates a false sense of modernization. Having access to the best tools is not the same as having the best system. And poorly designed systems with fast AI are more dangerous than poorly designed systems with slow humans.

The moment to build that system is now, before competitive pressure forces rushed decisions.

Practical implications for software companies

Some questions are worth asking before making any structural decisions:

Was the current process designed for agents, or merely adapted to accommodate them? Is there clarity about which stages of the SDLC are candidates for automation and which require human supervision?

Do the engineers on the team have the profile needed to responsibly orchestrate agents, or only to use them as assistants?

The distinction these questions draw is not semantic. It is the difference between a structural transformation and a superficial adoption of tools.

AI-native Pods are not the only way to respond to this transition. But they represent one of the most coherent responses for companies that develop custom software and need speed, quality, and predictability, without sacrificing any of the three.

Conclusion

AI has not made software engineering irrelevant. What it has made irrelevant is the logic that more people mean more capacity.

What is at stake now is not the replacement of professionals. It is the redefinition of what it means to be productive in software development. In this new model, productivity is a property of the system, not the sum of individual efforts.

AI-native Pods are a practical expression of this redefinition. They exist because value has shifted: away from execution and toward the design of the system that directs it. Toward the harness. Toward human-in-the-loop supervision with real seniority and full responsibility.

Companies that understand this early will build structural advantage. Those that wait for market pressure to force action will face a difficult gap to close, because intelligent production systems cannot be copied overnight. They are built over time, with method, and with the right people.